11.11.2017 Update: For the most up-to-date SLI benching results using Core i7-8700K at 4.6GHz, please see The GTX 1070 Ti SLI Performance Review vs. the GTX 1080 Ti – 35 Games Tested.

What’s better than a GTX 1080 Ti, the fastest video card in the world? Two of them in SLI! But is it worth the extra $700 for the second GTX 1080 Ti plus the cost of an HB bridge for added performance?

This follow-up to BTR’s launch evaluation of the GTX 1080 Ti tests the same 25 modern PC games at 4 resolutions – 1920×1080, 2560×1440, 3440×1440, and 3840×2160 – to see how well SLI’d GTX 1080 Tis scale. We have tested SLI and CrossFire before with rather mixed results. We concluded from our last evaluation of the TITAN X vs. GTX 1070 SLI: “It is pretty clear that CrossFire or SLI scaling in the newest games, especially with DX12, are going to depend on the developers’ support for each game requiring a mGPU gamer to fall back to DX11. We also note that recent drivers may break multi-GPU scaling that once worked. Even a new game patch may affect multi-GPU game performance drastically.”

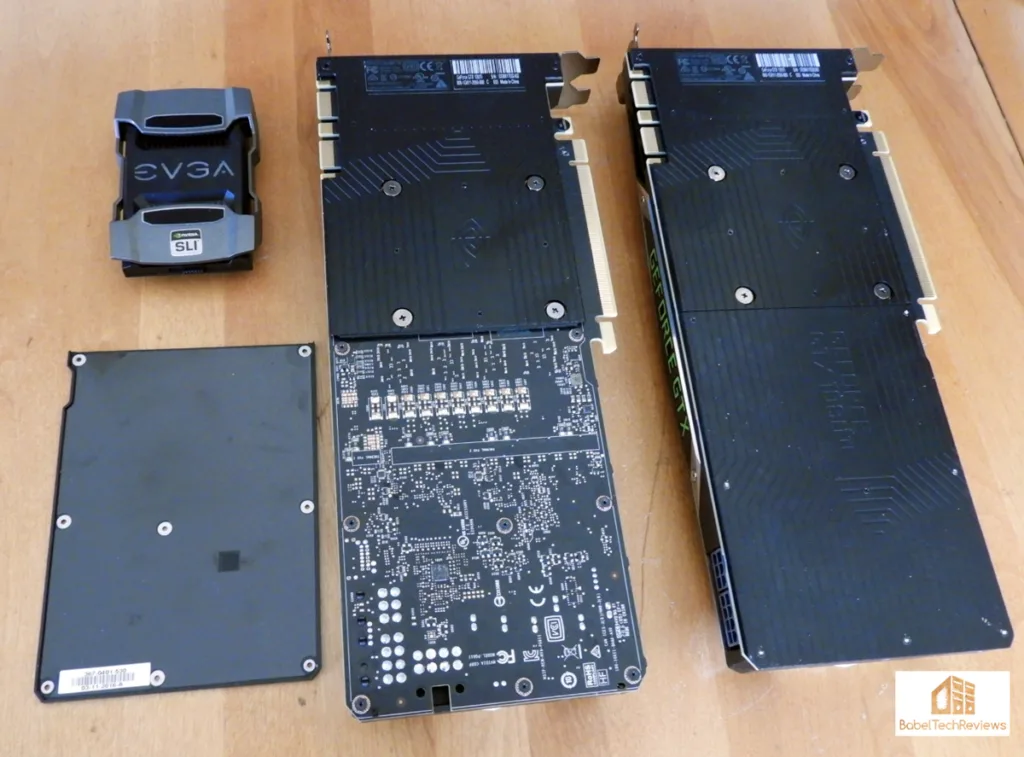

We were able to borrow a second GTX 1080 Ti and we ran the same benchmarks as in the GTX 1080 Ti launch evaluation last Thursday. We removed the backplate from the bottom GTX 1080 Ti so that the top card could intake air more easily and we used our EVGA HB SLI bridge.

The EVGA HB SLI Bridge

No longer do the flexible ribbon SLI bridges bundled free with SLI motherboards carry enough bandwidth for Pascal SLI. Now High Bandwidth (HB) SLI bridges are necessary to support the bandwidth for high display resolutions. We received an HB SLI bridge from EVGA which enabled us to run these benchmarks.

Our HB bridge is “single spacing” and it also features an RGBW switcher to select green, blue, white or (even) red lighting.

Here’s a closer look.

Here is the other side:

Here is GTX 1080 Ti SLI installed and lit up. Since we use the “zero spacing” configuration, we removed the bottom card’s backplate so the top card could intake air more easily. It made a few degrees of improvement for the hot-running SLI’d GTX 1080 Tis.

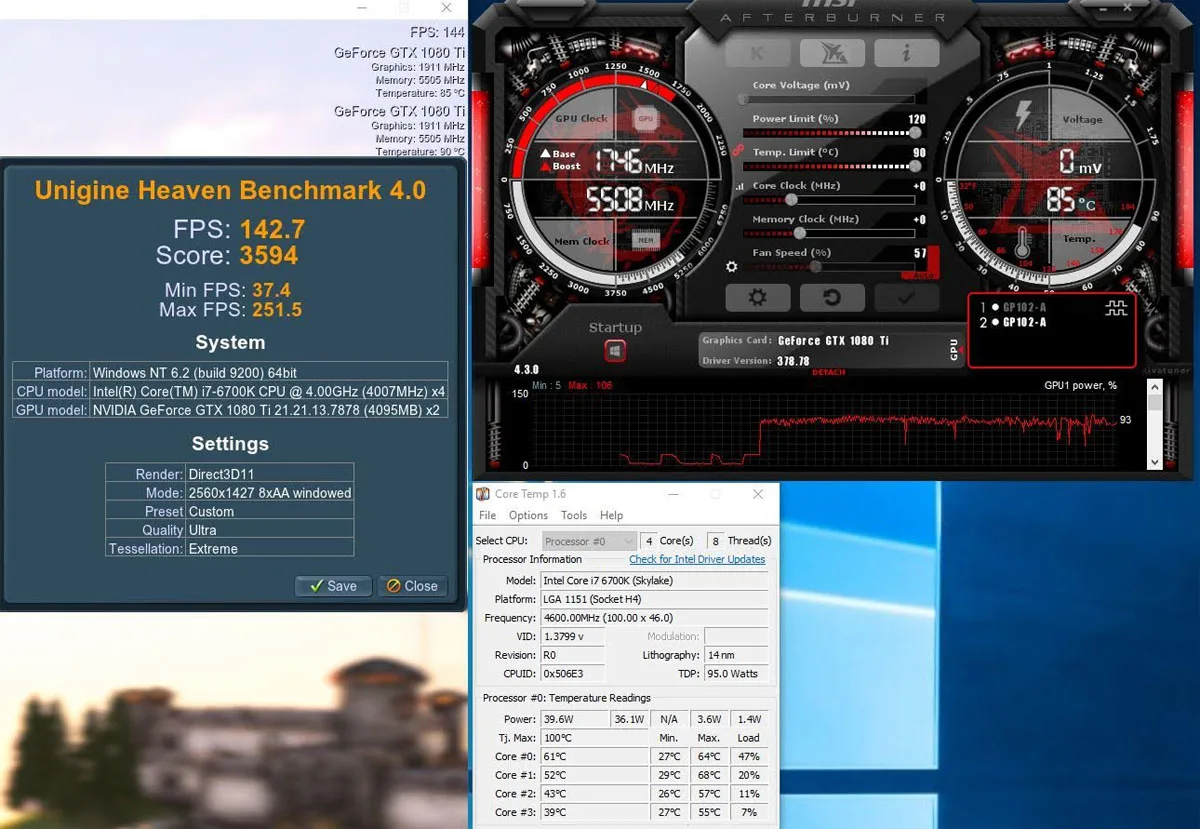

Temperatures of both cards generally stayed in the mid-80s C and occasionally neared 90C.  Better cooling would be helpful as it is very likely that at least one of the GTX 1080 Tis throttled pretty regularly in our very warm (Summer-like) test room.

Let’s check out the test configuration.

Test Configuration – Hardware

- Intel Core i7-6700K (reference 4.0GHz; HyperThreading is on and Turbo Boost is locked to 4.6GHz for all 4 cores by the motherboard’s BIOS).

- ASRock Z170M OC Formula motherboard (Intel Z170 chipset, latest BIOS, PCIe 3.0/3.1 specification, CrossFire/SLI 8x+8x)

- HyperX 16GB DDR4 (2x8GB, dual channel at 3333MHz), supplied by Kingston

- Founders Edition GTX 1080 Ti 11GB, reference clocks, supplied by NVIDIA

- 2TB Seagate FireCuda 7200 RPM SSHD

- EVGA 1000G 1000W power supply unit

- EVGA CLC 280 watercooler supplied by EVGA

- Onboard Realtek Audio

- Genius SP-D150 speakers, supplied by Genius

- Thermaltake Chaser MK-1 full tower case, supplied by Thermaltake

- ASUS 12X Blu-ray writer

- ACER Predator X34 GSYNC 3440×1440 display, supplied by NVIDIA

- Monoprice Crystal 4K 28″ 3840×2160 display.

Test Configuration – Software

- All games are patched to their latest versions at time of publication.

- Windows 10 64-bit Home edition. Latest DirectX and fully updated.

- Highest quality sound (stereo) used in all games.

- VSync is off in the control panel.

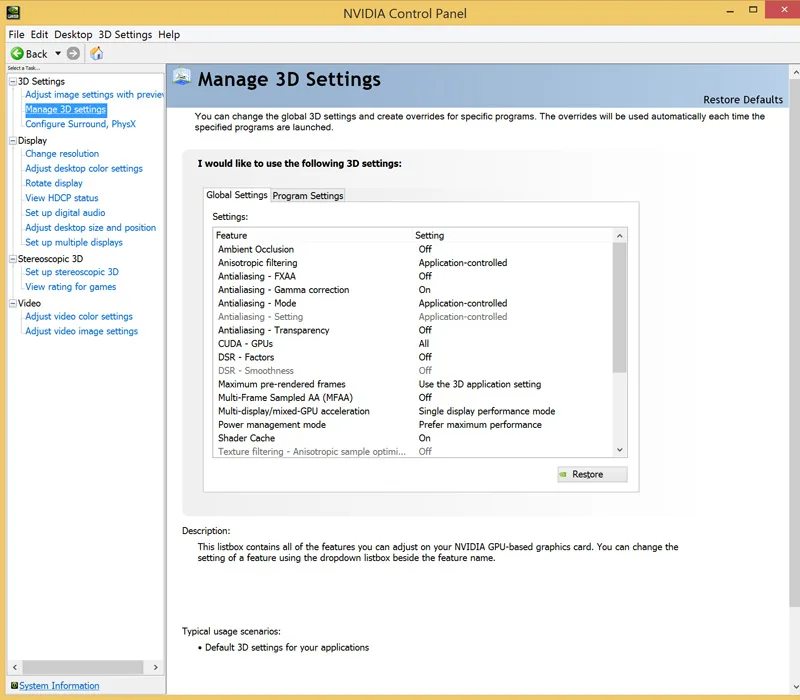

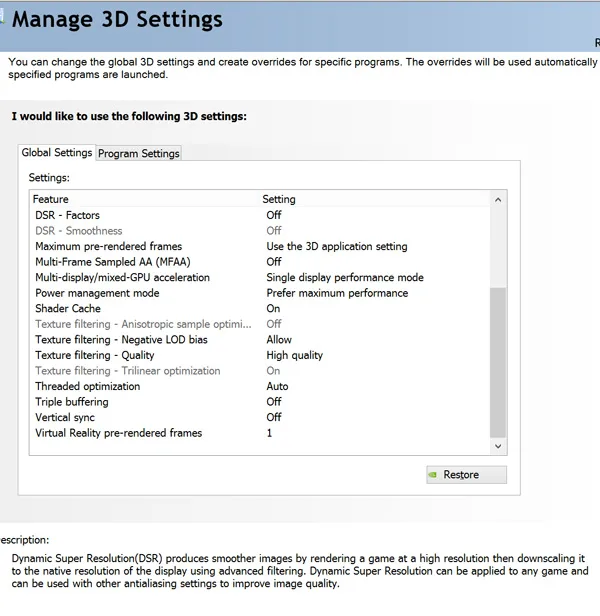

- NVIDIA’s GeForce GTX 1080 Ti 378.78 launch drivers. High Quality, prefer maximum performance, single display.

- MSI Afterburner used for setting the GTX 1080 Tis’ power limit/temps to maximum and linking their clocks.

- OCAT/PresentMon for DX12/Vulkan benching

- Fraps for benching DX11 games

Synthetic Benchmarks

- Firestrike – Ultra & Extreme

- Time Spy DX12

- VRMark

DX11 Games

- Crysis 3

- Metro: Last Light Redux (2014)

- Grand Theft Auto V

- The Witcher 3

- Fallout 4

- Assassin’s Creed Syndicate

- Just Cause 3

- Rainbow Six Siege

- DiRT Rally

- Far Cry Primal

- Call of Duty Infinite Warfare

- Watch Dogs 2

- Resident Evil 7

- For Honor

- Ghost Recon Wildlands

Vulkan Games

- DOOM

DX12 Games

- Tom Clancy’s The Division

- Ashes of the Singularity

- Hitman

- Rise of the Tomb Raider

- Total War: Warhammer

- Deus Ex Mankind Divided

- Gears of War 4

- Battlefield 1

- Sniper Elite 4

Nvidia’s Control Panel settings:

We used MSI’s Afterburner to set the GTX 1080 Tis’ Power and Temperature targets to their maximum.

Calculating Percentages

There are two methods of calculating percentages. One is the “Percentage Difference” that we originally used to compare the GTX 1080 versus the TITAN X, and the other is “Percentage Change” which we are using now to show the performance increase of GTX 1080 Ti SLI over a single GTX 1080 Ti.

For the percentage change, we mean the change in framerates between the single GTX 1080 Ti (V1, the original value) and GTX 1080 Ti SLI (V2), divided by the absolute value of the original value in fps, multiplied by 100.

Percentage change may be expressed by the algebraic formula: ( ΔV / |V1| ) * 100 = ((V2 – V1) / |V1|) * 100
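As a quick illustration of the two methods, here is a minimal Python sketch. The function names and the frame rates are hypothetical, chosen only to show the arithmetic, and are not results from our charts. Note that `percentage_difference` assumes the usual symmetric definition (the gap divided by the average of the two values), which is not spelled out above.

```python
def percentage_change(v1: float, v2: float) -> float:
    """Percentage change from the original value v1 to the new value v2."""
    if v1 == 0:
        raise ValueError("the original value v1 must be non-zero")
    return (v2 - v1) / abs(v1) * 100.0


def percentage_difference(a: float, b: float) -> float:
    """Symmetric percentage difference (assumed definition): gap over the mean."""
    mean = (a + b) / 2.0
    if mean == 0:
        raise ValueError("the mean of a and b must be non-zero")
    return abs(a - b) / abs(mean) * 100.0


# Hypothetical frame rates, not measured results.
single_fps = 60.0
sli_fps = 90.0

print(f"Percentage change:     {percentage_change(single_fps, sli_fps):.1f}%")      # 50.0%
print(f"Percentage difference: {percentage_difference(single_fps, sli_fps):.1f}%")  # 40.0%
```

The same 30 fps gap reads as a 50% increase by percentage change but only 40% by percentage difference, which is why it matters to state which method a chart uses.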

Let’s head to our performance charts.

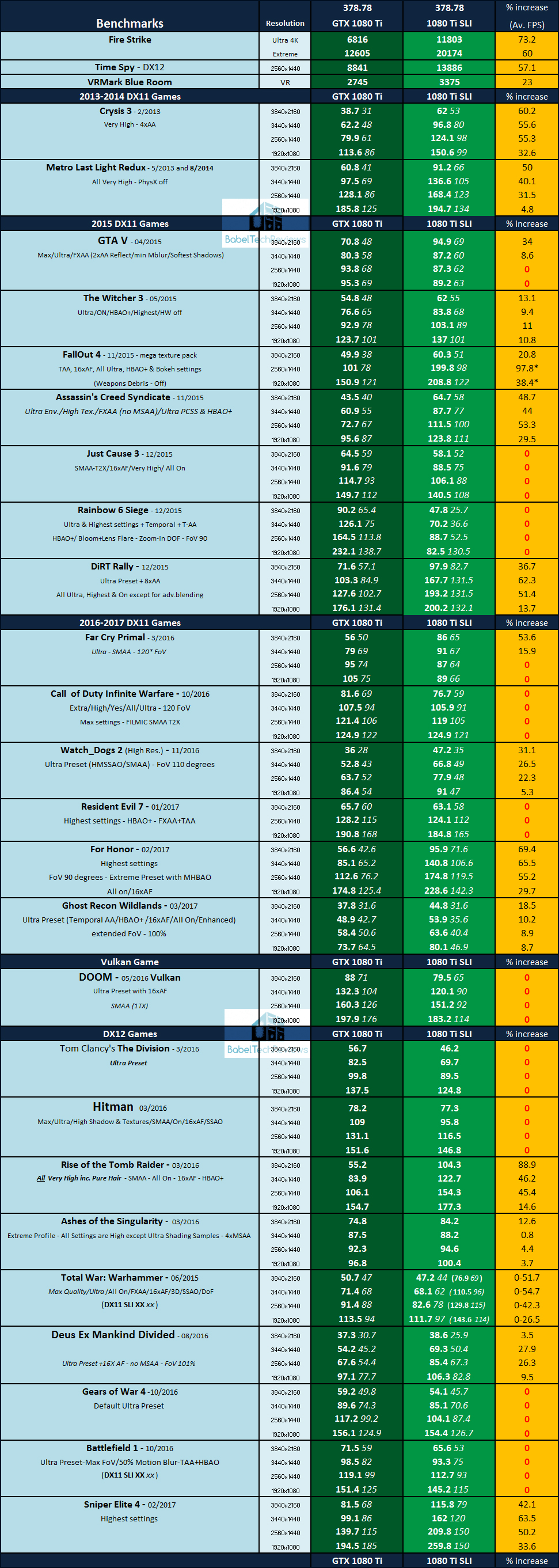

Performance summary charts

Here are the summary charts of 25 games and 3 synthetic tests. The highest settings are always chosen; DX12 is picked above DX11 where available, and the settings are ultra or maxed. Specific settings are listed on the performance charts. The benches were run at 1920×1080, 2560×1440, 3440×1440, and at 3840×2160.

All results, except for FireStrike, Time Spy, and VRMark, show average framerates, and higher is always better. Minimum framerates are shown next to the averages when they are available, but they are in italics and in a slightly smaller font. The GTX 1080 Ti performance results are in the first column and GTX 1080 Ti SLI results are shown in the second column. The third column shows the percentage increase from the single card to SLI: “0” represents no scaling (negative scaling is also shown as zero, since a gamer would simply disable SLI), while any other number is the SLI performance increase over a single GTX 1080 Ti.
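The rule for the third column can be sketched as a short Python function. This is only an illustration of the rule described above, not necessarily how the charts were produced; the function name `sli_scaling_percent` and the frame rates are hypothetical.

```python
def sli_scaling_percent(single_fps: float, sli_fps: float) -> float:
    """Percentage increase of SLI over a single card, floored at zero.

    Negative scaling is reported as 0 because a gamer would simply disable
    SLI and get single-card performance back.
    """
    if single_fps <= 0:
        raise ValueError("single_fps must be positive")
    change = (sli_fps - single_fps) / single_fps * 100.0
    return max(0.0, change)


# Hypothetical frame rates, not measured results.
print(sli_scaling_percent(50.0, 75.0))  # positive scaling: 50.0
print(sli_scaling_percent(60.0, 54.0))  # negative scaling is shown as 0.0
```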

Out of the 25 games that we tested, 9 do not scale at all, 2 more games have mixed scaling results depending on resolution, and one more game (TW Warhammer) needs to be played in DX11 as it scales negatively in DX12. On top of this, Fallout 4 has serious issues with its engine when it is pushed past 60 FPS, so in that game SLI’d GTX 1080 Tis are only useful at 3840×2160.

We also note that GTX 1080 Ti SLI is overkill for 1920×1080, where it generally scales rather poorly compared with higher resolutions. Ideally, a gamer would pick GTX 1080 Ti SLI for 4K resolution. The games that do benefit can see dramatic performance increases, often from 20% up to nearly 90%.

DX12 games appear to have some real issues with SLI support and it has to be implemented by the developer for it to work at all. In fact, Ashes of the Singularity supports DX12 multi-GPU but requires that SLI (and CrossFire) be turned off in the control panel or you will get some very mixed results.

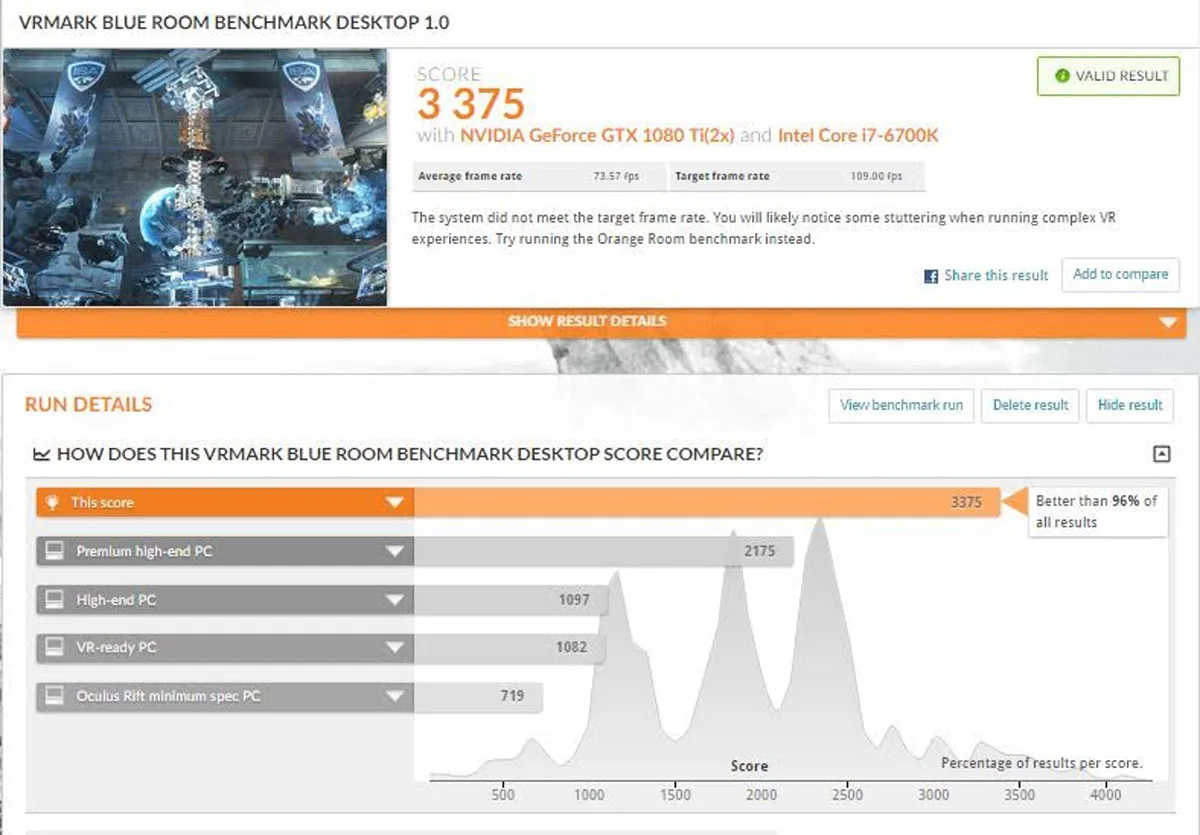

VR

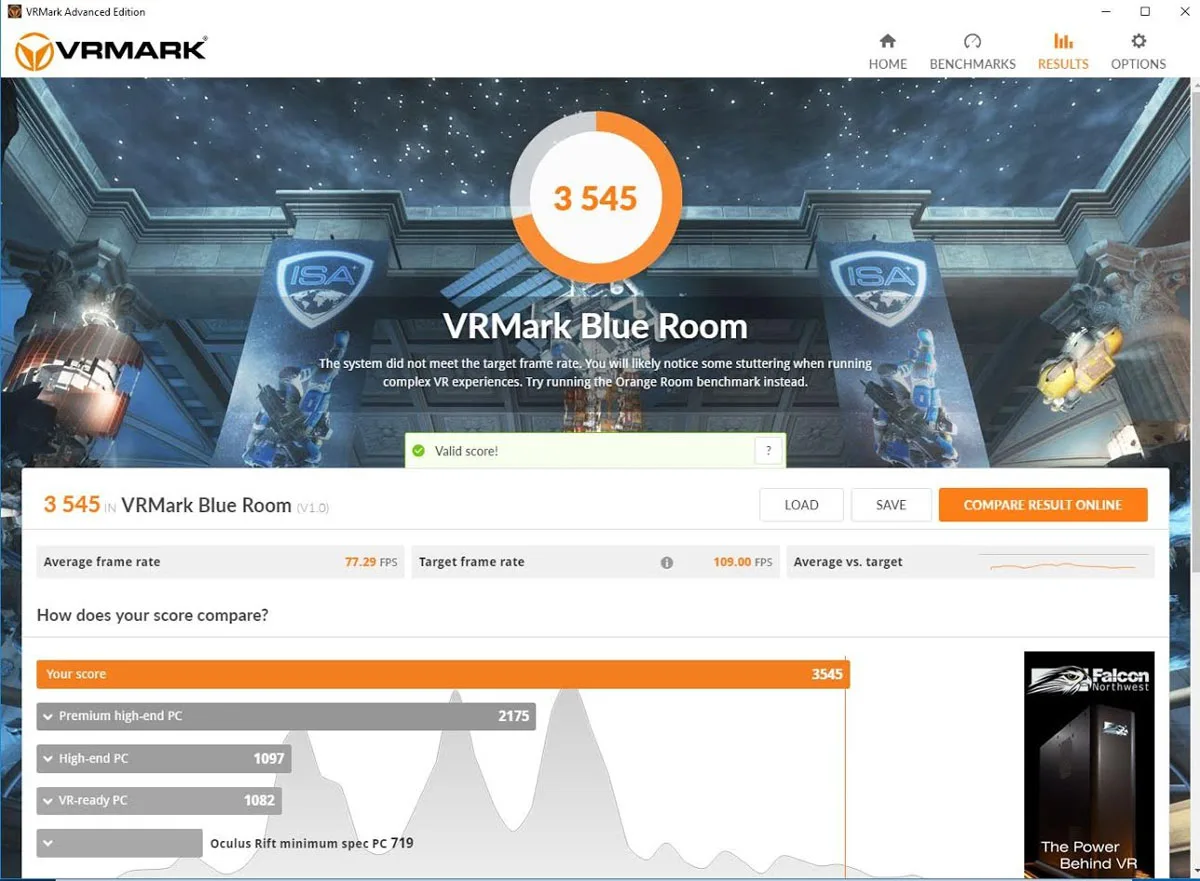

We also ran Futuremark’s VRMark Blue Room, which is designed to test future VR games. SLI does not appear to scale particularly well with VR yet, at only 23%. Even overclocked by +120MHz on the cores and +400MHz on the memory, our pair of GTX 1080 Tis is insufficient for running the Blue Room test adequately, even though BTR’s PC is in the top 3% of PCs tested!

Let’s head for our conclusion.

Conclusion

This has been quite an enjoyable exploration for us, comparing a single GTX 1080 Ti against GTX 1080 Ti SLI. SLI scaling is good mostly in older games, and in newer and DX12 games where the devs specifically support SLI.

The $699 GTX 1080 Ti is certainly expensive but it stands alone as the world’s fastest gaming GPU, even ahead of the $1200 TITAN X. However, SLI’d GTX 1080 Tis are out of reach of most gamers at over $1400. It is pretty clear that CrossFire or SLI scaling in the newest games, especially with DX12, is going to depend on the developers’ support for each game, occasionally requiring an SLI gamer to fall back to DX11. We also note that recent drivers may break SLI scaling that once worked and even a new game patch may affect SLI game performance dramatically.

The GTX 1080 Ti along with the TITAN X are ideal cards for 4K but if a gamer wants to enable totally maxed out settings, sometimes only SLI’d GTX 1080 Tis may suffice.

The Verdict:

- If you want the fastest video card configuration available today, GTX 1080 Ti SLI at $1400 is in a class completely by itself. But if you play the very latest games on Day 1 and rarely revisit your games, SLI may not be your best choice.

- Not every game will scale with SLI and many DX12 games do not scale at all. If you have a large library, revisit your older games often, and don’t mind waiting for an SLI profile, then GTX 1080 Ti SLI may well be your best choice for the very fastest video card gaming configuration that money can buy.

Stay tuned, there is a lot more coming from us at BTR. In our continuing GTX 1080 Ti series, we will test its overclocking abilities versus the overclocked TITAN X. And soon, we will bring you the benchmarks of the new AMD Ryzen CPU platform using these same 25 games versus our Intel platform.

Happy Gaming!
